Rising Token Demand: How Gooxi SY6108G-G4 Meets the AI Compute Challenge

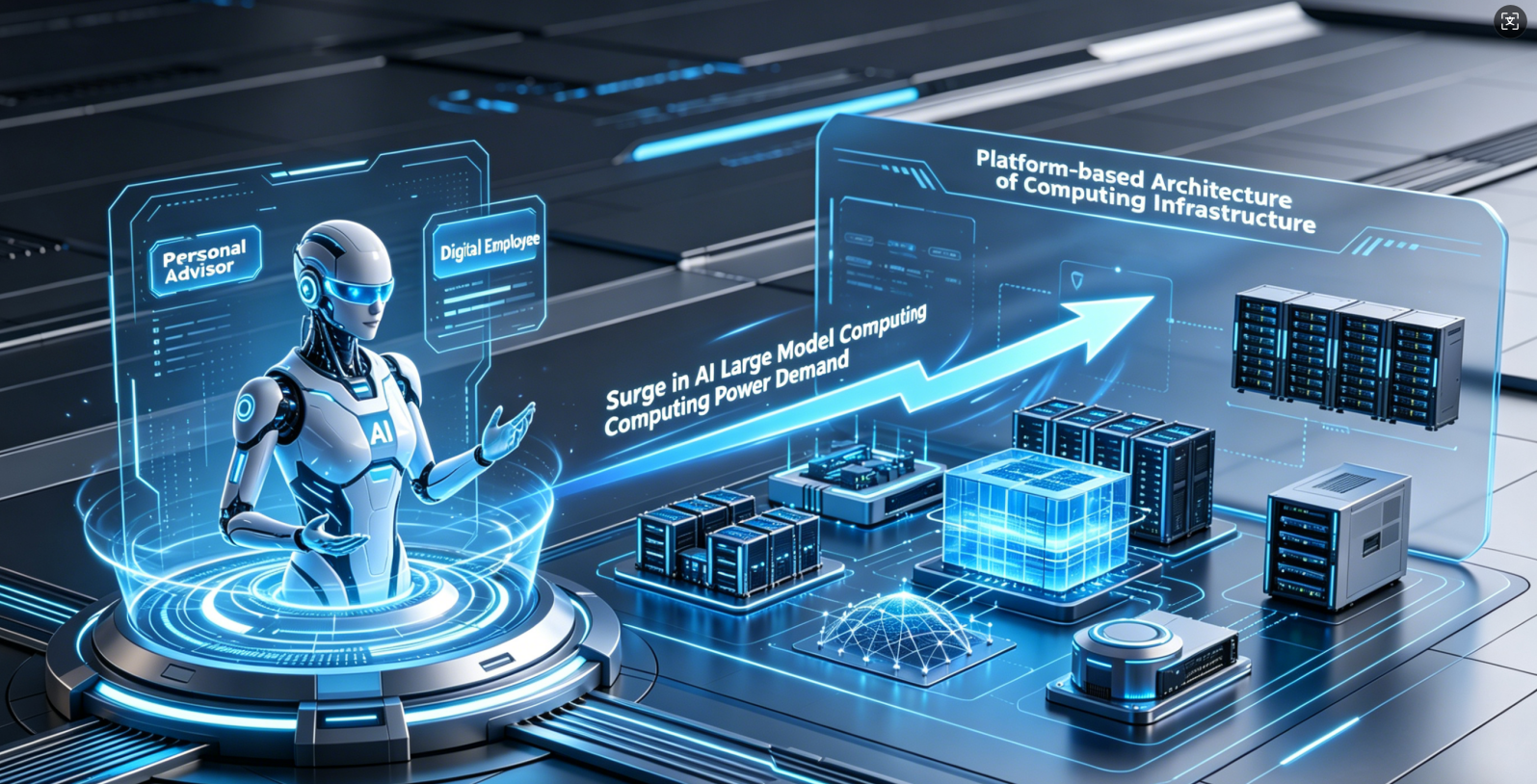

AI is rapidly moving beyond experimentation into large-scale commercial deployment. Driven by breakthroughs in AI agents and signals from industry events like GTC 2026, AI is evolving from a “digital assistant” into a true “digital workforce.” This shift is placing unprecedented pressure on computing infrastructure, which is now entering a new phase of platform-level and system-level development.

AI agents can autonomously perform complex tasks such as coding and debugging, but at a cost: a single task may consume 10 to 100 times more tokens than a traditional chatbot interaction. At the same time, the rapid adoption of multimodal applications such as text-to-image and text-to-video is further amplifying compute demand. Even a simple request can now require orders of magnitude more computing resources than before.

As a result, token consumption is surging. By March 2026, daily token usage for large AI models in China had exceeded 140 trillion, a sharp rise from early 2024 levels. The widespread adoption of AI agents and multimodal models continues to drive this demand, making scalable computing infrastructure more critical than ever.

To address this surge in compute demand, Gooxi introduces the SY6108G-G4 AI Server—designed to support large-scale model deployment and high-throughput inference with performance, flexibility, and reliability.

The SY6108G-G4 supports dual 4th/5th Gen Intel® Xeon® Scalable processors, with up to 385W TDP per CPU, delivering strong compute capability for large-scale parallel processing. This helps the host side keep pace with token handling and reduces latency per request.

Equipped with 32 DDR5 memory slots (up to 5600 MT/s), the system significantly increases memory bandwidth, supporting long-context processing and high-concurrency workloads typical of large model inference.
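As a rough illustration of what the 5600 MT/s figure implies, the sketch below estimates theoretical peak memory bandwidth. The channel count (8 per socket) and the 8-byte data path per channel are assumptions not stated in this article, and all names are hypothetical. Sustained bandwidth in a real system will be lower, and fully populated slots may run below the maximum rated speed.

```python
# Back-of-the-envelope estimate of theoretical peak DDR5 bandwidth.
# ASSUMPTIONS (not from the article): 8 memory channels per CPU socket,
# and a 64-bit (8-byte) data path per channel, excluding ECC bits.

BYTES_PER_TRANSFER = 8      # assumed 64-bit data width per channel
CHANNELS_PER_SOCKET = 8     # assumed channel count per CPU
SOCKETS = 2                 # dual-CPU system, as stated in the article
TRANSFER_RATE_MTS = 5600    # "up to 5600 MT/s", as stated in the article


def peak_bandwidth_gbs(mt_per_s: int, channels: int) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    megabytes_per_s = mt_per_s * BYTES_PER_TRANSFER * channels
    return megabytes_per_s / 1000


per_channel_gbs = peak_bandwidth_gbs(TRANSFER_RATE_MTS, 1)
per_socket_gbs = peak_bandwidth_gbs(TRANSFER_RATE_MTS, CHANNELS_PER_SOCKET)
system_gbs = SOCKETS * per_socket_gbs

print(f"Per channel: {per_channel_gbs:.1f} GB/s")
print(f"Per socket:  {per_socket_gbs:.1f} GB/s")
print(f"Dual socket: {system_gbs:.1f} GB/s")
```

Under these assumptions the upper bound works out to about 44.8 GB/s per channel and roughly 717 GB/s across both sockets; treat it as a ceiling for comparison, not a measured result.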

With support for up to 8 double-width 600W GPUs and direct CPU-GPU connectivity, the system minimizes communication latency and enables high-throughput performance. A single system can support massive concurrent requests with real-time response, making it well-suited for AI agents, multimodal processing, and model fine-tuning.
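To give a sense of the power envelope these figures imply, the following back-of-the-envelope sketch adds up the maximum rated power of the CPUs and GPUs quoted above. It deliberately ignores memory, storage, fans, and power-supply losses, which the article does not quantify, so the result is a floor on peak component power and not a sizing recommendation. The variable names are illustrative only.

```python
# Sum of maximum rated CPU and GPU power, using only figures from the article.
# Memory, storage, fans, and PSU conversion losses are NOT included.

CPU_COUNT = 2        # dual-socket configuration
CPU_TDP_W = 385      # up to 385 W TDP per CPU
GPU_COUNT = 8        # up to 8 double-width GPUs
GPU_POWER_W = 600    # up to 600 W per GPU

cpu_total_w = CPU_COUNT * CPU_TDP_W
gpu_total_w = GPU_COUNT * GPU_POWER_W
compute_total_w = cpu_total_w + gpu_total_w
gpu_share = gpu_total_w / compute_total_w

print(f"CPUs: {cpu_total_w} W")
print(f"GPUs: {gpu_total_w} W")
print(f"CPU + GPU total: {compute_total_w} W ({gpu_share:.0%} from GPUs)")
```

At maximum configuration the GPUs account for most of the compute power draw, which is why power delivery and cooling matter so much in a chassis of this class.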

AI applications vary widely in deployment requirements, from centralized data centers to distributed edge environments.

The SY6108G-G4 provides 13 PCIe expansion slots, allowing flexible configuration of network and accelerator cards. This lets the same platform scale from light, intermittent workloads to large-scale, high-concurrency token processing.

The system supports hybrid storage configurations (SATA/SAS/NVMe), with up to 12 front-access drives (including NVMe), along with built-in M.2 and Slimline options. This design balances high-speed caching and large-capacity storage, improving data throughput and overall system efficiency for AI workloads.

As AI systems are increasingly deployed in mission-critical industries such as finance, government, and energy, infrastructure reliability becomes essential.

The SY6108G-G4 adopts a 6U design with comprehensive reliability features across power, cooling, and security.

As AI continues to scale, computing infrastructure will remain the foundation of innovation.

Gooxi continues to focus on AI computing, delivering high-performance, reliable, and scalable solutions tailored to large model workloads. By working closely with ecosystem partners, Gooxi enables organizations to accelerate AI deployment and unlock real-world value across industries.
